Not AI for AI's Sake

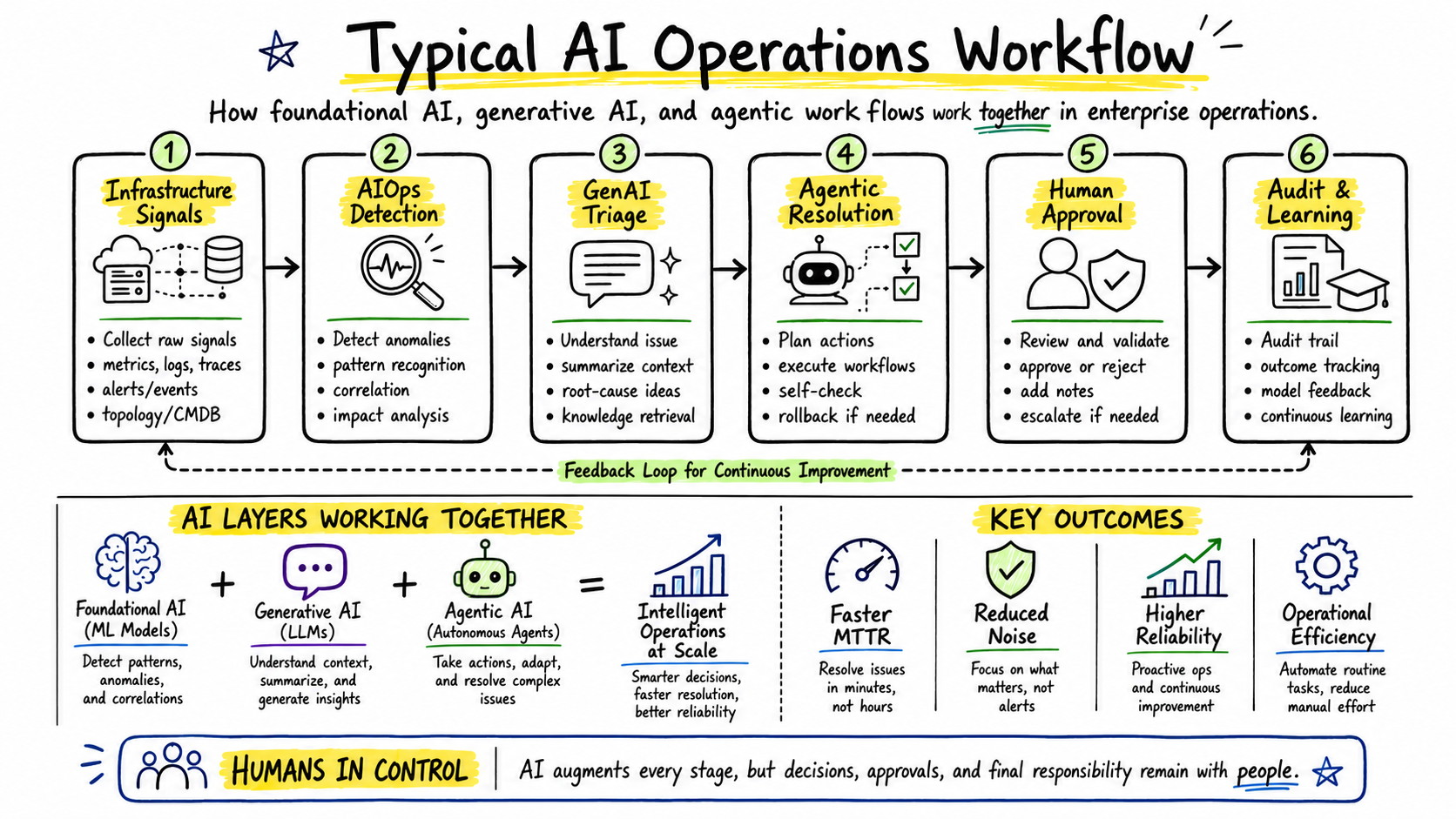

Every AI system I build follows three core principles: it must be safe by design, be fully auditable, and deliver measurable business value. AI in operations should enhance human decision-making, not replace human judgment.

AIOps & Intelligent Automation

AI-native operations that learn from your infrastructure patterns to predict issues before they impact users. Incorporates agentic AI workflows and LLMOps patterns for automated signal triage.

- Anomaly detection for metrics and logs

- Predictive scaling based on traffic patterns

- Intelligent alert correlation and noise reduction

- Root cause analysis acceleration
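
To make the first item concrete, here is a minimal sketch of metric anomaly detection using a trailing-window z-score. It is one simple technique among many, not a description of any specific production system; the function name, window size, threshold, and sample latencies are all illustrative assumptions.

```python
from statistics import mean, stdev


def detect_anomalies(values, window=5, threshold=3.0):
    """Return indices whose value deviates from the trailing-window mean
    by more than `threshold` sample standard deviations."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: treat any change at all as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical latency samples in milliseconds, with one spike.
latency_ms = [100, 102, 98, 101, 99, 100, 250, 101, 100]
print(detect_anomalies(latency_ms))  # → [6]
```

A spike also inflates the window that follows it, so the points right after an anomaly are judged against a noisier baseline. Real deployments usually account for that, along with seasonality.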

Governed AI & Assistants

Enterprise-grade AI governance ensuring safe, compliant, and controlled AI adoption across development teams.

- Policy-based guardrails for AI assistants

- Data loss prevention in AI workflows

- Usage analytics and compliance reporting

- Safe adoption playbooks and training
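
As a rough illustration of a data loss prevention guardrail, the sketch below redacts sensitive strings from a prompt before it would be sent to an AI assistant. The patterns are assumptions chosen for the example: the email regex is deliberately simple, and the `sk-` key format is hypothetical rather than any vendor's real format.

```python
import re

# Simplified email pattern; real DLP rules are usually stricter.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Hypothetical secret format: "sk-" followed by 20 or more alphanumerics.
API_KEY = re.compile(r"\bsk-[A-Za-z0-9]{20,}\b")


def redact(prompt):
    """Replace sensitive matches with placeholders and report what was found."""
    findings = []
    for label, pattern in (("EMAIL", EMAIL), ("API_KEY", API_KEY)):
        prompt, count = pattern.subn(f"[REDACTED_{label}]", prompt)
        if count:
            findings.append((label, count))
    return prompt, findings


clean, findings = redact("Contact ops@example.com with key sk-abcdefghijklmnopqrstuvwx")
print(clean)     # → Contact [REDACTED_EMAIL] with key [REDACTED_API_KEY]
print(findings)  # → [('EMAIL', 1), ('API_KEY', 1)]
```

Returning the findings alongside the cleaned text is what would feed usage analytics and compliance reporting.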

Advisory AI Systems

AI that advises rather than acts autonomously. Human-in-the-loop for critical decisions with full transparency.

- Recommendation engines with confidence scores

- Decision support with explainability

- Rollback capabilities on all AI actions

- Complete audit trails for compliance
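
The advisory pattern can be sketched as a recommendation that carries its own confidence score and rationale, plus a function that records the human decision in an audit log. This is a minimal illustration under stated assumptions: the `Recommendation` fields, the `record_decision` helper, and the example service name are all hypothetical, and an in-memory list stands in for a real append-only store.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class Recommendation:
    action: str
    confidence: float  # 0.0 to 1.0, as reported by the model
    rationale: str     # explanation shown to the reviewer


def record_decision(rec, reviewer, approved, audit_log):
    """Log the human decision on a recommendation; executes nothing itself."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "reviewer": reviewer,
        "approved": approved,
        **asdict(rec),
    }
    audit_log.append(json.dumps(entry, sort_keys=True))
    return approved


audit_log = []
rec = Recommendation(
    action="scale checkout-service to 6 replicas",
    confidence=0.87,
    rationale="p95 latency rising with queue depth",
)
if record_decision(rec, reviewer="alice", approved=True, audit_log=audit_log):
    print("approved:", rec.action)
```

The system only proposes. Nothing is applied until a named reviewer approves, and every decision, including rejections, leaves a log entry.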

MLOps & Model Governance

Production-grade machine learning and agentic AI pipelines with proper versioning, monitoring, and governance throughout the model lifecycle. Covers both traditional ML and LLMOps patterns for agentic operations.

- Model registry and version control

- Automated model validation and testing

- Drift detection and performance monitoring

- Bias detection and fairness metrics
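
One common way to quantify drift is the Population Stability Index (PSI), which compares how a feature is distributed in a baseline sample and in recent data. The sketch below is a simplified, dependency-free version; the equal-width binning, the epsilon floor, and the sample data are assumptions made for illustration.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a recent sample,
    using equal-width bins derived from the baseline range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def fractions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor empty bins at a small epsilon so the logarithm stays defined.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]       # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]  # concentrated in the upper half
print(psi(baseline, baseline))  # → 0.0
print(psi(baseline, shifted) > 0.2)  # → True
```

A frequently quoted rule of thumb treats PSI above roughly 0.2 as a significant shift, but thresholds should be tuned per feature and per model.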

Intelligent Automation

Smart automation that learns from patterns and adapts to changing conditions while maintaining safety guardrails.

- Self-healing infrastructure responses

- Automated incident triage and routing

- Intelligent deployment strategies

- Cost optimization recommendations

Conversational AI

Natural language interfaces for platform operations, making complex systems accessible to all team members.

- ChatOps for infrastructure management

- Natural language queries for observability

- Guided troubleshooting assistants

- Knowledge base integration